- Nvidia cuda toolkit mem check driver

- Nvidia cuda toolkit mem check software

- Nvidia cuda toolkit mem check code

- Nvidia cuda toolkit mem check PC

- Nvidia cuda toolkit mem check license

Trial software allows the user to evaluate the software for a limited amount of time. After that trial period (usually 15 to 90 days), the user can decide whether to buy the software or not. Demos, by contrast, are usually not time-limited like trial software, but their functionality is limited.

Nvidia cuda toolkit mem check license

Demo programs offer limited functionality for free but charge for an advanced set of features or for removing advertisements from the program's interface. In some cases, all functionality is disabled until the license is purchased.

Free to Play (Freemium) licenses are commonly used for video games: users can download and play the game for free, then decide whether to pay (Premium) for additional features, services, or virtual or physical goods that expand the game's functionality. In some cases, ads may be shown to users.

Programs released under an open source license can be used at no cost for both personal and commercial purposes. There are many different open source licenses, but they all must comply with the Open Source Definition; in brief, the software can be freely used, modified, and shared.

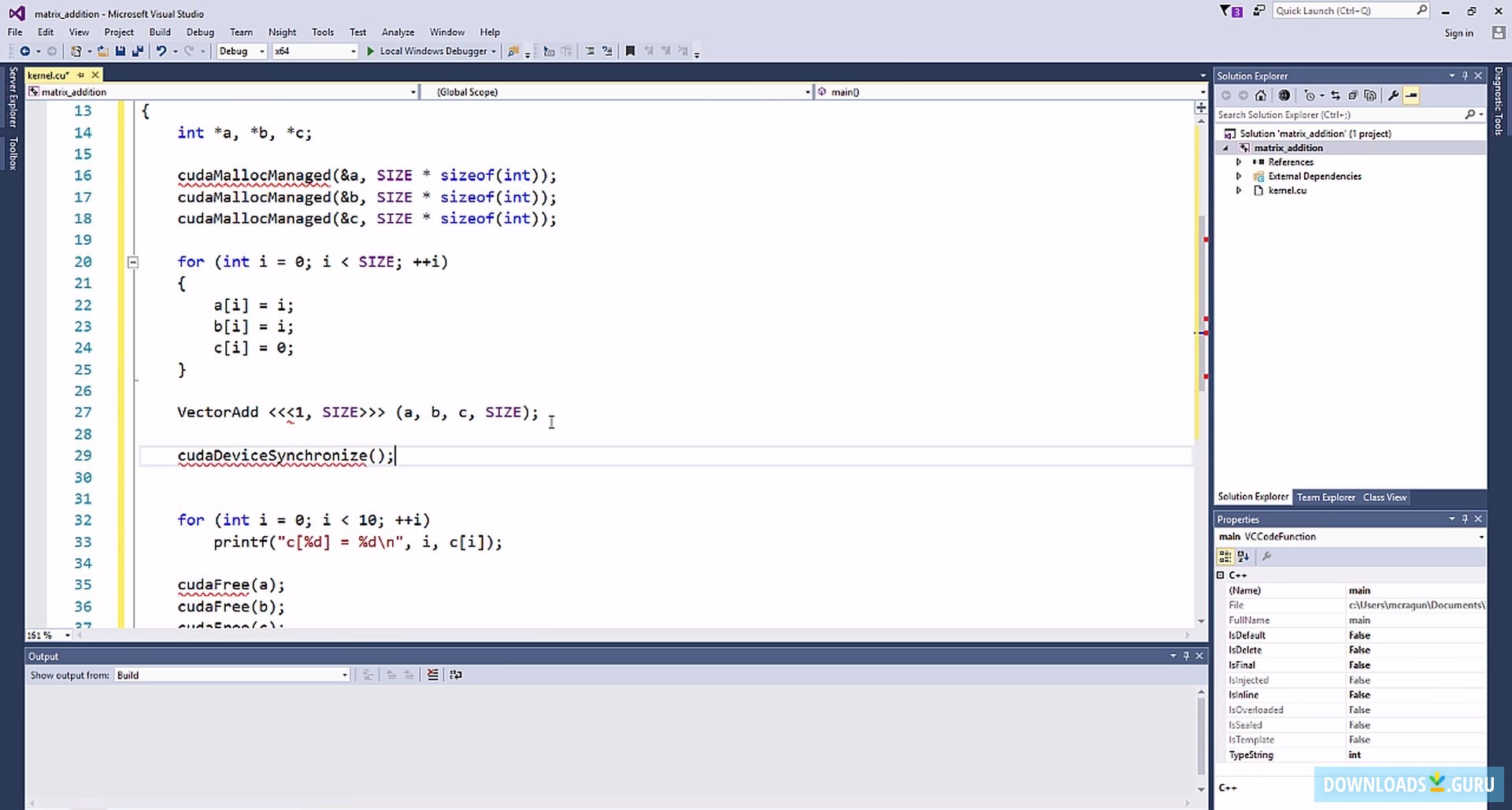

Nvidia cuda toolkit mem check code

Open Source software is software with source code that anyone can inspect, modify, or enhance. Freeware programs can be downloaded and used free of charge, without any time limitations, for both personal and professional (commercial) use.

The profiler output includes the following columns:

- Stream Id: Identification number for the stream.
- Occupancy: The ratio of the number of active warps per multiprocessor to the maximum number of warps that can be active per multiprocessor.
- Profiler counters: Refer to the profiler counters section for a list of supported counters.
- grid size: Number of blocks in the grid along the X, Y, and Z dimensions, shown together in a single column.
- block size: Number of threads in a block along the X, Y, and Z dimensions, shown together in a single column.
- dyn smem per block: Dynamic shared memory size per block, in bytes.
- sta smem per block: Static shared memory size per block, in bytes.
- reg per thread: Number of registers per thread.
- mem transfer size: Memory transfer size, in bytes.
- host mem transfer type: Specifies whether a memory transfer uses "Pageable" or "Page-locked" memory.

All kernel launches are non-blocking by default, but if any profiler counters are enabled, kernel launches become blocking. Asynchronous memory copy requests in different streams are non-blocking.

Nvidia cuda toolkit mem check driver

Each profiled method is either a memory copy, named "memcpy*", or a GPU kernel, identified by the kernel's name. Memory copies carry a suffix that describes the type of memory transfer; for example, "memcpyDToHasync" denotes an asynchronous transfer from device memory to host memory. Two timing columns are reported for each method:

- GPU Time: The execution time of the method on the GPU.
- CPU Time: The sum of the GPU time and the CPU overhead to launch the method. For driver-generated data, CPU Time is only the CPU launch overhead for non-blocking methods; for blocking methods, it is the sum of the GPU time and the CPU overhead.

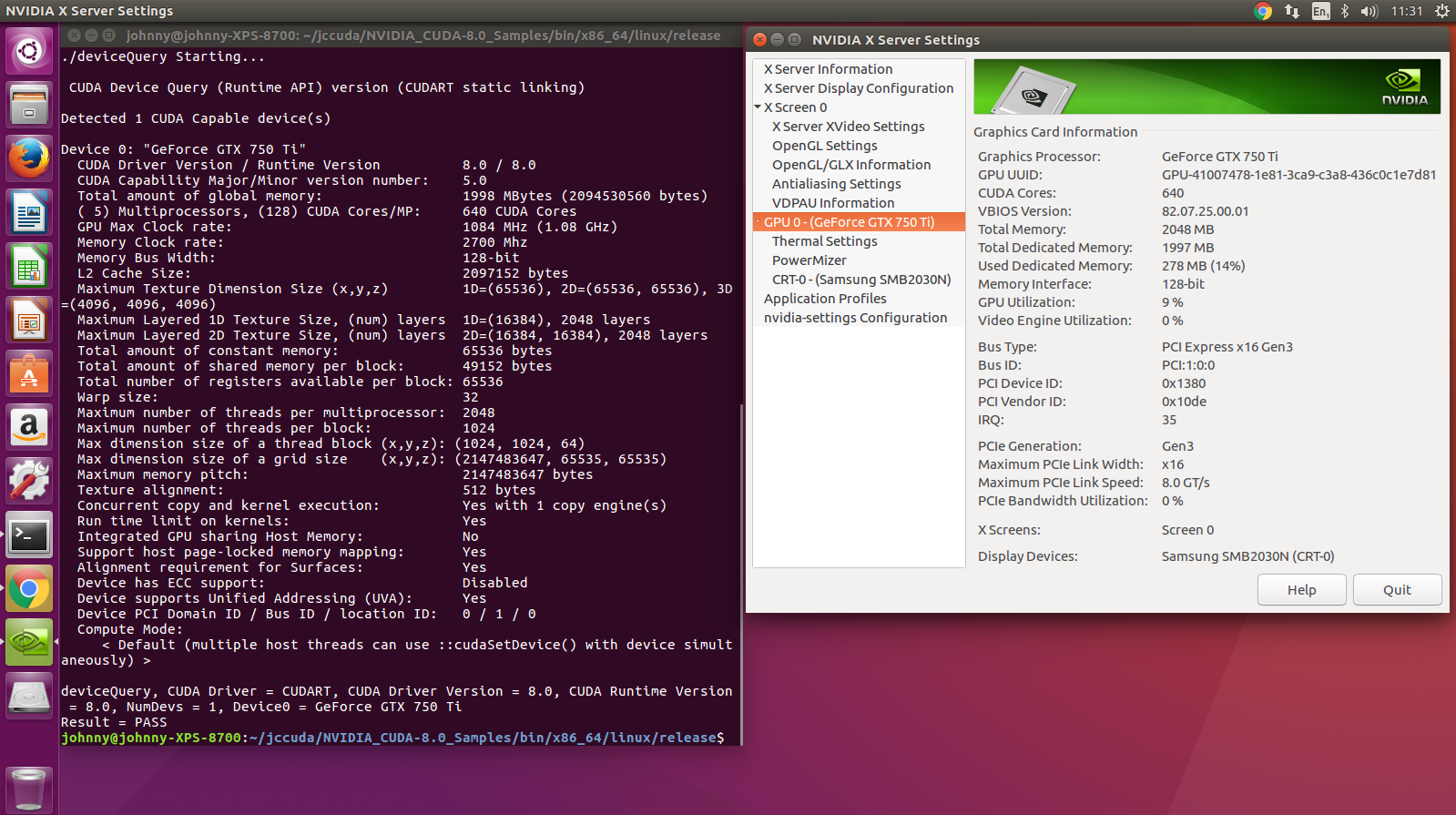

Nvidia cuda toolkit mem check PC

NVIDIA CUDA Toolkit provides a development environment for creating high-performance GPU-accelerated applications. With the CUDA Toolkit, you can develop, optimize, and deploy your applications on GPU-accelerated embedded systems, desktop workstations, enterprise data centers, cloud-based platforms, and HPC supercomputers.

The toolkit includes GPU-accelerated libraries, debugging and optimization tools, a C/C++ compiler, and a runtime library to deploy your application. GPU-accelerated CUDA libraries enable drop-in acceleration across multiple domains such as linear algebra, image and video processing, deep learning, and graph analytics. For developing custom algorithms, you can use the available integrations with commonly used languages and numerical packages, as well as well-published development APIs. Your CUDA applications can be deployed across all NVIDIA GPU families, both on-premises and on GPU instances in the cloud. Using built-in capabilities for distributing computations across multi-GPU configurations, scientists and researchers can develop applications that scale from single-GPU workstations to cloud installations with thousands of GPUs. The toolkit also provides IDE integration with graphical and command-line tools for debugging, identifying performance bottlenecks on the GPU and CPU, and providing context-sensitive optimization guidance.

Develop applications using a programming language you already know, including C, C++, Fortran, and Python. To get started, browse the online getting-started resources, optimization guides, and illustrative examples, and collaborate with the rapidly growing developer community. Download NVIDIA CUDA Toolkit for PC today!